The future of health needs real insights.

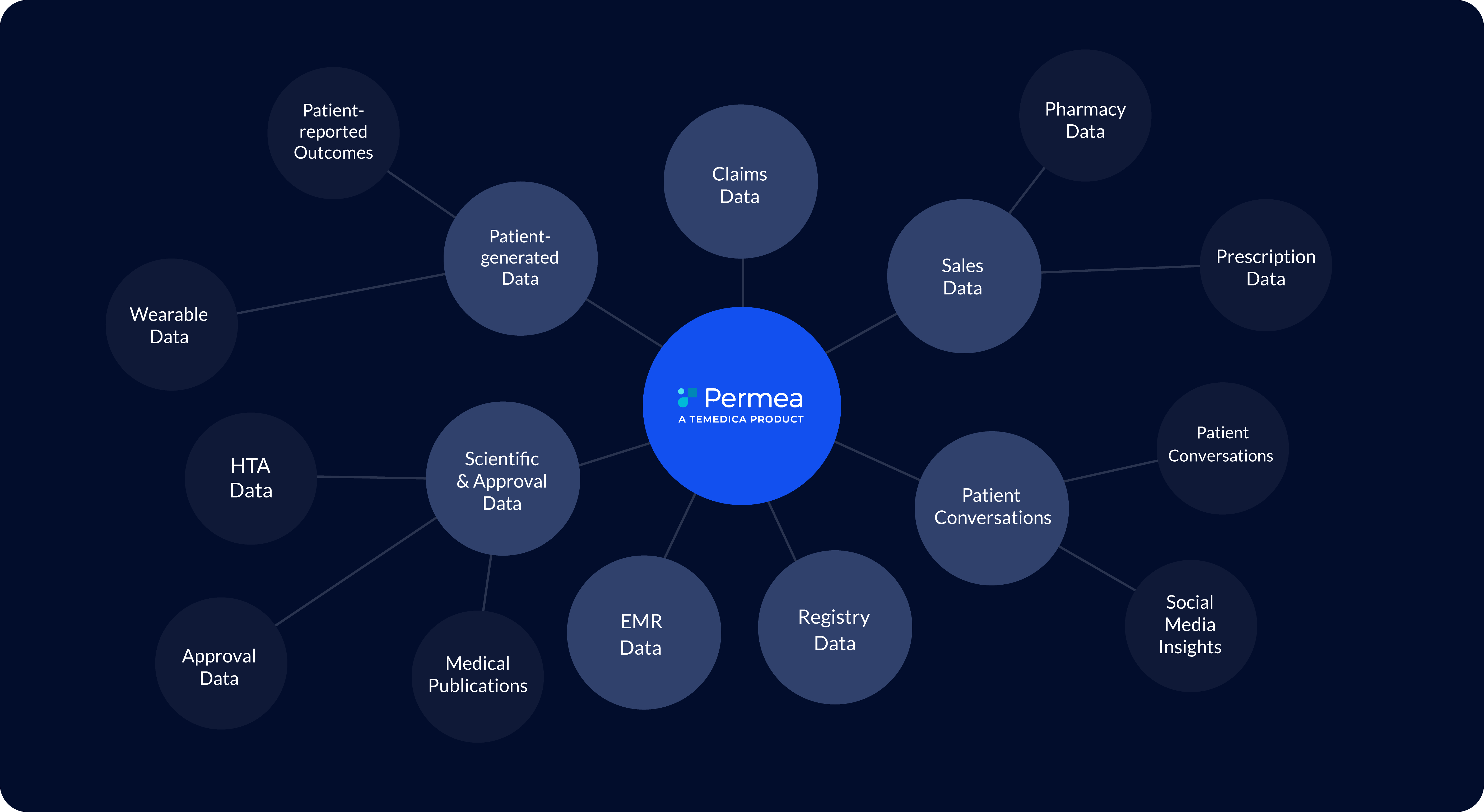

At Temedica, we run Europe's leading ecosystem for real-world insights in the healthcare sector. We de-silo and combine anonymised health data to provide a 360° patient understanding to all stakeholders of the healthcare system.

Explore the value of real-world evidence with Permea, which turns anonymised health data into transformative insights for the life sciences.

Personalised health needs real insights.

We believe that every patient deserves personalised healthcare. However, providing truly personalised solutions requires an in-depth understanding of patients.

At Temedica, we work with patients, life science companies, pharmacies, healthcare providers and other critical healthcare stakeholders to aggregate data that enables a comprehensive real-world understanding. Within the most stringent technical and ethical frameworks, we employ artificial intelligence to give all stakeholders in the healthcare system easy access to the latest and most relevant health insights.

Insights at your fingertips.

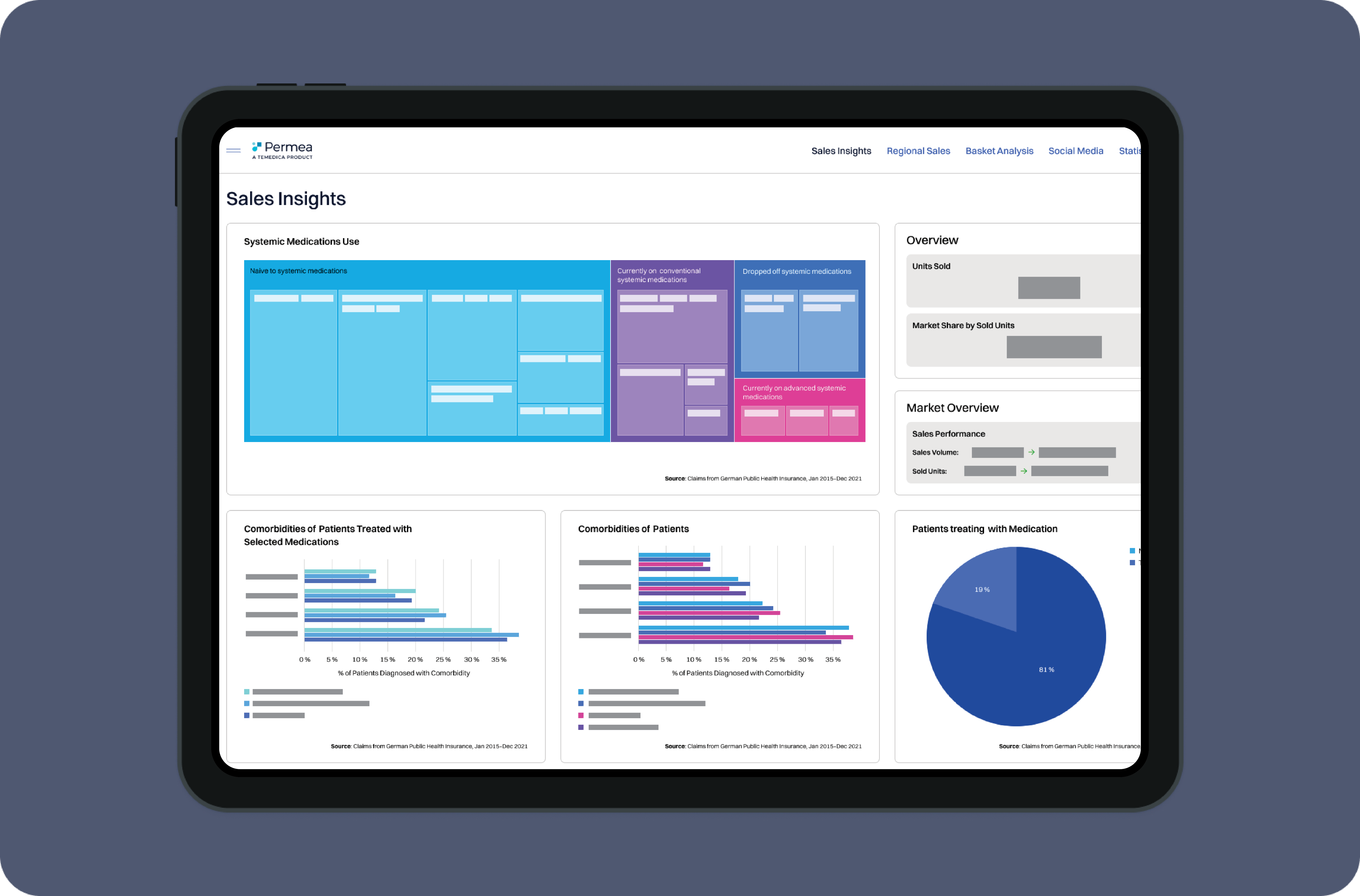

PERMEA MONITOR

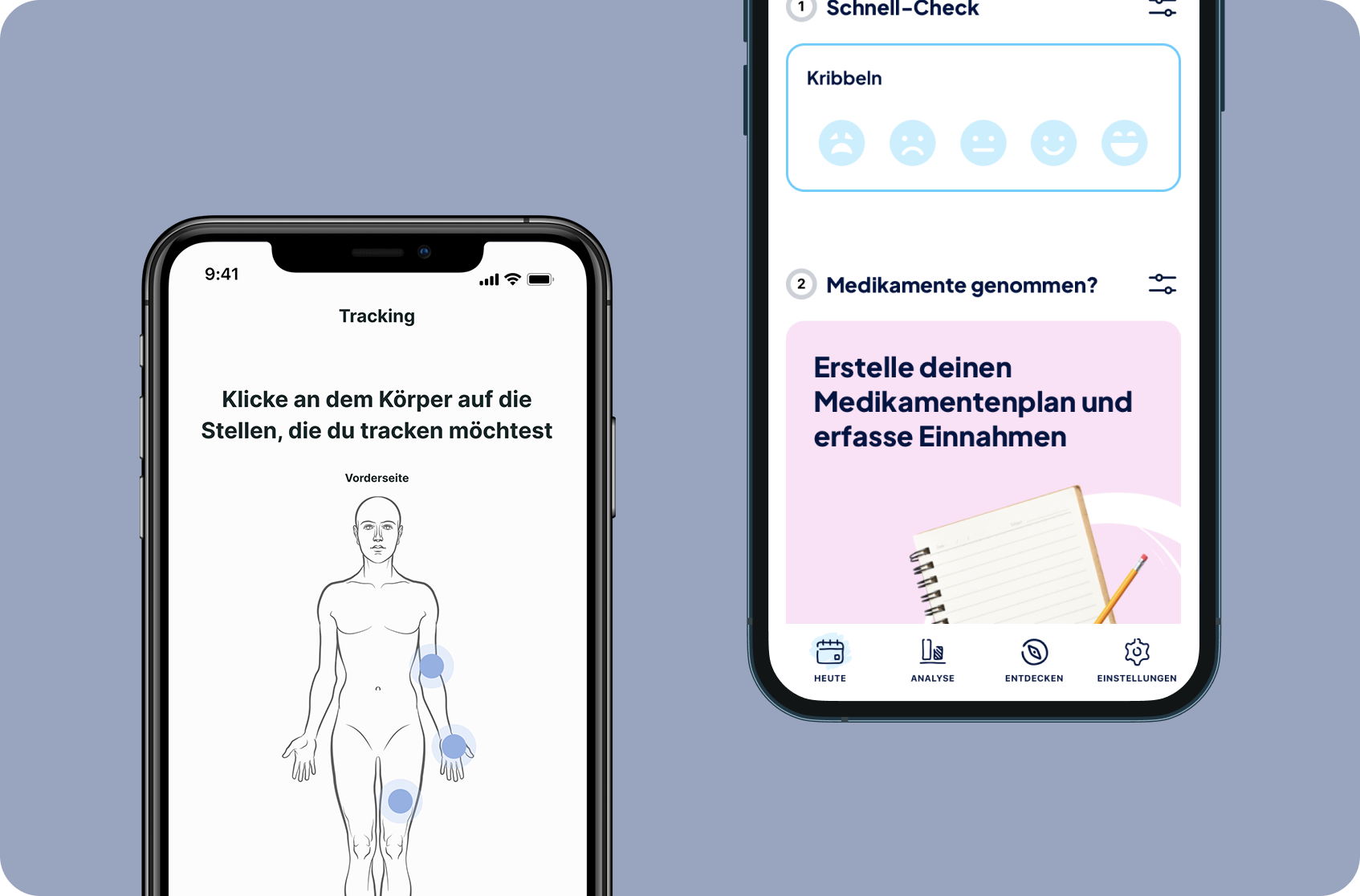

ADD-ON DIGITAL COMPANION

Permea delivers real-world insights in a highly intuitive dashboard that can be customised to address the specific requirements of pharmaceutical enterprises.

Develop highly effective, tailored marketing and sales strategies based on real-world evidence. Characterise your target market by analysing disease and treatment patterns, and identify unmet needs and treatment gaps.

Optimise your brand positioning, monitor marketing performance, and tailor your communication to engage effectively with HCPs and pharmacists.

Get a competitive edge by understanding the patient journey to develop targeted marketing strategies and patient engagement initiatives that address specific needs. Identify the factors that drive patient decisions and use this knowledge to activate patients for successful treatment initiation, compliance, and therapy switches.

Unlock the potential of direct patient access and leverage Patient-Reported Outcomes (PROs) as a dynamic data source that provides information on patient behaviours and outcomes. Enhance patient activation through treatment information that empowers patients to proactively ask about your treatment options.

Patient-centred, engaging digital companion for all patients in a specific therapeutic area. Involves patients and motivates them to share their data.

Generation of longitudinal real-world data on patients' behaviours and outcomes.

Detailed information about existing and new treatments, activating patients to explicitly ask for the partner's treatment options.

What our partners say

"As a collaboration partner with Temedica, the developers of the Orya app for psoriasis patients, we are excited about Temedica's novel insights. These have the potential to take today's approach to medicine to a new level - for example, by combining real-world insights from digital tools with various other intelligent sources of information to sustainably improve patient care."

"Temedica helped us to understand the real-world needs of our patients, providing us with the opportunity to listen to what they have to say and take their opinions into consideration."

"Based on my experience in Real-World Evidence research, I like Temedica's innovative approach to facilitate a prompt, comprehensive, and precise understanding of patient journeys, disease burden, and health outcomes, particularly in the field of multiple sclerosis."

"Temedica's patient-centric and proactive approach to healthcare seamlessly aligns with my own. Their extensive ecosystem, rich with Real-World Insights, proves indispensable in my work on 'Digital Twins'. This allows for personalized diagnoses and a deeper understanding of disease phenotypes. Together with Temedica, we're transitioning from lengthy texts to concise, data-driven narratives, thereby promoting patient understanding and transparency."

"The expansive data landscape of the Temedica Ecosystem is a treasure trove for scientists to produce actionable insights for clinical practice."

"With a chronic disease, the right support tool counts. Temedica's Multiple Sclerosis Companion is it for me. More than just reminders and tracking, it is my personal compass through the highs and lows of my condition. Personalized guidance and symptom insights, and a sense of never being alone on this journey. It's not just an app, it's a game-changer for all of us in the chronic community."

"Thanks to Temedica's health insights, we gain a profound and precise understanding of care realities and patient journeys of individual patient cohorts. This knowledge enables us to plan our initiatives with greater precision and to focus even more on the individual needs of patients."